This HTML version of

You might prefer to read the PDF version, or you can buy a hard copy from Amazon.

Chapter 9 Differentiation and Integration

This chapter picks up where the previous chapter left off, looking at the relationship between windows in the time domain and filters in the frequency domain.

In particular, we’ll look at the effect of a finite difference window, which approximates differentiation, and the cumulative sum operation, which approximates integration.

The code for this chapter is in chap09.ipynb, which is in the repository for this book (see Section 0.2). You can also view it at http://tinyurl.com/thinkdsp09.

9.1 Finite differences

In Section 8.1, we applied a smoothing window to the stock price of Facebook and found that a smoothing window in the time domain corresponds to a low-pass filter in the frequency domain.

In this section, we’ll look at daily price changes and see that computing the difference between successive elements, in the time domain, corresponds to a high-pass filter.

Here’s the code to read the data, store it as a wave, and compute its spectrum.

import pandas as pd

names = ['date', 'open', 'high', 'low', 'close', 'volume']

df = pd.read_csv('fb_1.csv', header=0, names=names)

ys = df.close.values[::-1]

close = thinkdsp.Wave(ys, framerate=1)

spectrum = close.make_spectrum()

This example uses Pandas to read the CSV file; the result is a DataFrame, df, with columns for the opening price, closing price, and high and low prices. I select the closing prices and save them in a Wave object. The framerate is 1 sample per day.

Figure 9.1 shows this time series and its spectrum. Visually, the time series resembles Brownian noise (see Section 4.3). And the spectrum looks like a straight line, albeit a noisy one. The estimated slope is -1.9, which is consistent with Brownian noise.

Figure 9.1: Daily closing price of Facebook and the spectrum of this time series.

Now let’s compute the daily price change using np.diff:

diff = np.diff(ys)

change = thinkdsp.Wave(diff, framerate=1)

change_spectrum = change.make_spectrum()

Figure 9.2 shows the resulting wave and its spectrum. The daily changes resemble white noise, and the estimated slope of the spectrum, -0.06, is near zero, which is what we expect for white noise.

Figure 9.2: Daily price change of Facebook and the spectrum of this time series.

9.2 The frequency domain

Computing the difference between successive elements is the same as convolution with the window [1, -1]. If the order of those elements seems backward, remember that convolution reverses the window before applying it to the signal.

We can see the effect of this operation in the frequency domain by computing the DFT of the window.

diff_window = np.array([1.0, -1.0])

padded = thinkdsp.zero_pad(diff_window, len(close))

diff_wave = thinkdsp.Wave(padded, framerate=close.framerate)

diff_filter = diff_wave.make_spectrum()

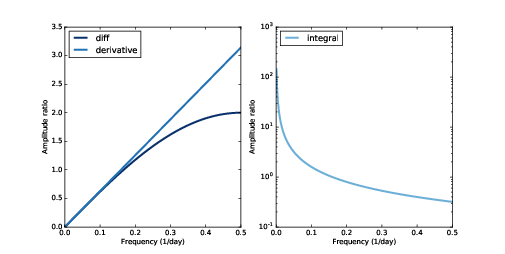

Figure 9.3: Filters corresponding to the diff and differentiate operators (left) and integration operator (right, log-y scale).

Figure 9.3 shows the result. The finite difference window corresponds to a high pass filter: its amplitude increases with frequency, linearly for low frequencies, and then sublinearly after that. In the next section, we’ll see why.

9.3 Differentiation

The window we used in the previous section is a numerical approximation of the first derivative, so the filter approximates the effect of differentiation.

Differentiation in the time domain corresponds to a simple filter in the frequency domain; we can figure out what it is with a little math.

Suppose we have a complex sinusoid with frequency f:

| Ef(t) = e2 π i f t |

The first derivative of Ef is

| Ef(t) = 2 π i f e2 π i f t |

which we can rewrite as

| Ef(t) = 2 π i f Ef(t) |

In other words, taking the derivative of Ef is the same as multiplying by 2 π i f, which is a complex number with magnitude 2 π f and angle π/2.

We can compute the filter that corresponds to differentiation, like this:

deriv_filter = close.make_spectrum()

deriv_filter.hs = PI2 * 1j * deriv_filter.fs

I started with the spectrum of close, which has the right size and framerate, then replaced the hs with 2 π i f. Figure 9.3 (left) shows this filter; it is a straight line.

As we saw in Section 7.4, multiplying a complex sinusoid by a complex number has two effects: it multiplies the amplitude, in this case by 2 π f, and shifts the phase offset, in this case by π/2.

If you are familiar with the language of operators and eigenfunctions, each Ef is an eigenfunction of the differentiation operator, with the corresponding eigenvalue 2 π i f. See http://en.wikipedia.org/wiki/Eigenfunction.

If you are not familiar with that language, here’s what it means:

- An operator is a function that takes a function and returns another function. For example, differentiation is an operator.

- A function, g, is an eigenfunction of an operator, A, if applying A to g has the effect of multiplying the function by a scalar. That is, A g = λ g.

- In that case, the scalar λ is the eigenvalue that corresponds to the eigenfunction g.

- A given operator might have many eigenfunctions, each with a corresponding eigenvalue.

Because complex sinusoids are eigenfunctions of the differentiation operator, they are easy to differentiate. All we have to do is multiply by a complex scalar.

For signals with more than one component, the process is only slightly harder:

- Express the signal as the sum of complex sinusoids.

- Compute the derivative of each component by multiplication.

- Add up the differentiated components.

If that process sounds familiar, that’s because it is identical to the algorithm for convolution in Section 8.6: compute the DFT, multiply by a filter, and compute the inverse DFT.

Spectrum provides a method that applies the differentiation filter:

# class Spectrum:

def differentiate(self):

self.hs *= PI2 * 1j * self.fs

We can use it to compute the derivative of the Facebook time series:

deriv_spectrum = close.make_spectrum()

deriv_spectrum.differentiate()

deriv = deriv_spectrum.make_wave()

Figure 9.4: Comparison of daily price changes computed by np.diff and by applying the differentiation filter.

Figure 9.4 compares the daily price changes computed by np.diff with the derivative we just computed. I selected the first 50 values in the time series so we can see the differences more clearly.

The derivative is noisier, because it amplifies the high frequency components more, as shown in Figure 9.3 (left). Also, the first few elements of the derivative are very noisy. The problem there is that the DFT-based derivative is based on the assumption that the signal is periodic. In effect, it connects the last element in the time series back to the first element, which creates artifacts at the boundaries.

To summarize, we have shown:

- Computing the difference between successive values in a signal can be expressed as convolution with a simple window. The result is an approximation of the first derivative.

- Differentiation in the time domain corresponds to a simple filter in the frequency domain. For periodic signals, the result is the first derivative, exactly. For some non-periodic signals, it can approximate the derivative.

Using the DFT to compute derivatives is the basis of spectral methods for solving differential equations (see http://en.wikipedia.org/wiki/Spectral_method).

In particular, it is useful for the analysis of linear, time-invariant systems, which is coming up in Chapter 10.

9.4 Integration

Figure 9.5: Comparison of the original time series and the integrated derivative.

In the previous section, we showed that differentiation in the time domain corresponds to a simple filter in the frequency domain: it multiplies each component by 2 π i f. Since integration is the inverse of differentiation, it also corresponds to a simple filter: it divides each component by 2 π i f.

We can compute this filter like this:

integ_filter = close.make_spectrum()

integ_filter.hs = 1 / (PI2 * 1j * integ_filter.fs)

Figure 9.3 (right) shows this filter on a log-y scale, which makes it easier to see.

Spectrum provides a method that applies the integration filter:

# class Spectrum:

def integrate(self):

self.hs /= PI2 * 1j * self.fs

We can confirm that the integration filter is correct by applying it to the spectrum of the derivative we just computed:

integ_spectrum = deriv_spectrum.copy()

integ_spectrum.integrate()

But notice that at f=0, we are dividing by 0. The result in NumPy is NaN, which is a special floating-point value that represents “not a number”. We can partially deal with this problem by setting this value to 0 before converting the spectrum back to a wave:

integ_spectrum.hs[0] = 0

integ_wave = integ_spectrum.make_wave()

Figure 9.5 shows this integrated derivative along with the original time series. They are almost identical, but the integrated derivative has been shifted down. The problem is that when we clobbered the f=0 component, we set the bias of the signal to 0. But that should not be surprising; in general, differentiation loses information about the bias, and integration can’t recover it. In some sense, the NaN at f=0 is telling us that this element is unknown.

Figure 9.6: A sawtooth wave and its spectrum.

If we provide this “constant of integration”, the results are identical, which confirms that this integration filter is the correct inverse of the differentiation filter.

9.5 Cumulative sum

In the same way that the diff operator approximates differentiation, the cumulative sum approximates integration. I’ll demonstrate with a Sawtooth signal.

signal = thinkdsp.SawtoothSignal(freq=50)

in_wave = signal.make_wave(duration=0.1, framerate=44100)

Figure 9.6 shows this wave and its spectrum.

Wave provides a method that computes the cumulative sum of a wave array and returns a new Wave object:

# class Wave:

def cumsum(self):

ys = np.cumsum(self.ys)

ts = self.ts.copy()

return Wave(ys, ts, self.framerate)

We can use it to compute the cumulative sum of in_wave:

out_wave = in_wave.cumsum()

out_wave.unbias()

Figure 9.7: A parabolic wave and its spectrum.

Figure 9.7 shows the resulting wave and its spectrum. If you did the exercises in Chapter 2, this waveform should look familiar: it’s a parabolic signal.

Comparing the spectrum of the parabolic signal to the spectrum of the sawtooth, the amplitudes of the components drop off more quickly. In Chapter 2, we saw that the components of the sawtooth drop off in proportion to 1/f. Since the cumulative sum approximates integration, and integration filters components in proportion to 1/f, the components of the parabolic wave drop off in proportion to 1/f2.

We can see that graphically by computing the filter that corresponds to the cumulative sum:

cumsum_filter = diff_filter.copy()

cumsum_filter.hs = 1 / cumsum_filter.hs

Because cumsum is the inverse operation of diff, we

start with a copy of diff_filter, which is the filter

that corresponds to the diff operation, and then invert the

hs.

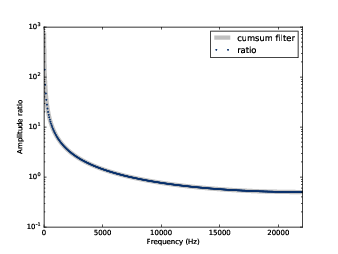

Figure 9.8: Filters corresponding to cumulative sum and integration.

Figure 9.8 shows the filters corresponding to cumulative sum and integration. The cumulative sum is a good approximation of integration except at the highest frequencies, where it drops off a little faster.

To confirm that this is the correct filter for the cumulative

sum, we can compare it to the ratio of the spectrum

out_wave to the spectrum of in_wave:

in_spectrum = in_wave.make_spectrum()

out_spectrum = out_wave.make_spectrum()

ratio_spectrum = out_spectrum.ratio(in_spectrum, thresh=1)

Figure 9.9: Filter corresponding to cumulative sum and actual ratios of the before-and-after spectrums.

And here’s the method that computes the ratios:

def ratio(self, denom, thresh=1):

ratio_spectrum = self.copy()

ratio_spectrum.hs /= denom.hs

ratio_spectrum.hs[denom.amps < thresh] = np.nan

return ratio_spectrum

When denom.amps is small, the resulting ratio is noisy, so I set those values to NaN.

Figure 9.9 shows the ratios and the filter corresponding to the cumulative sum. They agree, which confirms that inverting the filter for diff yields the filter for cumsum.

Finally, we can confirm that the Convolution Theorem applies by applying the cumsum filter in the frequency domain:

out_wave2 = (in_spectrum * cumsum_filter).make_wave()

Within the limits of floating-point error, out_wave2 is

identical to out_wave, which we computed using cumsum, so

the Convolution Theorem works! But note that this demonstration only

works with periodic signals.

9.6 Integrating noise

In Section 4.3, we generated Brownian noise by computing the cumulative sum of white noise. Now that we understand the effect of cumsum in the frequency domain, we have some insight into the spectrum of Brownian noise.

White noise has equal power at all frequencies, on average. When we compute the cumulative sum, the amplitude of each component is divided by f. Since power is the square of magnitude, the power of each component is divided by f2. So on average, the power at frequency f is proportional to 1 / f2:

| Pf = K / f2 |

where K is a constant that’s not important. Taking the log of both sides yields:

| logPf = logK − 2 logf |

And that’s why, when we plot the spectrum of Brownian noise on a log-log scale, we expect to see a straight line with slope -2, at least approximately.

In Section 9.1 we plotted the spectrum of closing prices for Facebook, and estimated that the slope is -1.9, which is consistent with Brownian noise. Many stock prices have similar spectrums.

When we use the diff operator to compute daily changes, we multiplied the amplitude of each component by a filter proportional to f, which means we multiplied the power of each component by f2. On a log-log scale, this operation adds 2 to the slope of the power spectrum, which is why the estimated slope of the result is near 0.1 (but a little lower, because diff only approximates differentiation).

9.7 Exercises

The notebook for this chapter is chap09.ipynb. You might want to read through it and run the code.

Solutions to these exercises are in chap09soln.ipynb.

Plot the filters that corresponds to the 2nd difference and the 2nd derivative and compare them. Hint: In order to get the filters on the same scale, use a wave with framerate 1.